Crash Dumps - Analyse Bugcheck and Process Dumps

How to Analyse Bugcheck and Process Crash Dumps

Download the debugger package that matches YOUR machine's architecture

In other words, if you're running 32-bit Vista, install the 32-bit version of the "Debugging Tools for Windows", irrespective of whether you intend to debug 32-bit or 64-bit code. Likewise for x64 - download and install the x64 package, and you'll still be able to debug 32-bit crashes. Few home and small business users would be running Itaniums, so don't accidentally grab the IA-64 build. "IA-64" (Itanium) is a vastly different architecture to "x64" (AMD64, a.k.a. EM64T when sold by Intel).

Override the default install path and install to c:\debuggers instead

This is entirely optional but you'll be happier, especially if you intend to do a fair amount of dump gazing. Choose the "custom" install option and use c:\debuggers (or d:\debuggers or whatever other drive) as the install path. It makes it easier to work with the tools and removes that pesky "Program Files" space from the path name. The debugger package is a lot more command-line oriented than many apps nowadays.

Register WinDBG as the default handler for dump files

Another entirely optional step that makes life easier. By registering WinDBG (the main debugger you'll want to use) as the default handler for common dump file types, you'll be able to just double-click on a dump and have it open in WinDBG without having to go through the "File | Open..." menu operation every time. This registration needs to be performed from an elevated CMD prompt (run CMD as administrator):

C:\>cd debuggers

C:\debuggers>windbg.exe -IA

WinDBG should fire up and pop up a dialog box that says this:

---------------------------

WinDbg:6.11.0001.402 AMD64

---------------------------

WinDbg successfully registered file assocations for .DMP, .HDMP, .MDMP, .KDMP and .WEW.

---------------------------

OK

---------------------------

Set your symbol path

This is NOT optional. In fact, getting it wrong is the #1 reason for frustration when learning to debug. There are several ways to get it right, and this is possibly the simplest:

- Start WinDBG

- WITHOUT opening any dump files, click File, "Symbol File Path..."

- Set the path to be the following:

SRV*C:\SymCache*http://msdl.microsoft.com/download/symbols

It can be any valid local path, c:\Symbols or e:\MySymbols or whatever, but the SRV and http portions must be exactly as above. Don't move the local path around too much though because the idea is to build up a local cache of symbols that minimises your waiting time while symbols are being downloaded from MS. That local cache can grow quite large over time, if you do a lot of dump analysis, and the debugger will be subsequently quicker to open new dumps because of the larger symbols cache.

- Exit WinDBG. It should ask you whether you wish to save workspace settings. "Yes" is almost always the appropriate answer to WinDBG's workspace prompts.

- From now onwards the symbol path should already be set whenever you start WinDBG.
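If you'd rather not type the symbol path by hand, the string can be assembled in a script. The following is only a minimal sketch: the helper name build_symbol_path is made up for illustration, and it relies on the _NT_SYMBOL_PATH environment variable, which the debuggers consult when no path has been saved in a workspace. That variable is not part of the steps above, so treat it as an alternative rather than a replacement.

```python
import os

# URL taken verbatim from the symbol path shown above.
MS_SYMBOL_SERVER = "http://msdl.microsoft.com/download/symbols"


def build_symbol_path(cache_dir, server_url=MS_SYMBOL_SERVER):
    """Return a symbol-server path of the form SRV*<local cache>*<server URL>.

    build_symbol_path is a hypothetical helper, not part of the debugger package.
    """
    if "*" in cache_dir:
        # '*' separates the fields, so it cannot appear inside the cache path.
        raise ValueError("cache directory must not contain '*'")
    return f"SRV*{cache_dir}*{server_url}"


symbol_path = build_symbol_path(r"C:\SymCache")

# Only affects this process and anything it launches afterwards.
os.environ["_NT_SYMBOL_PATH"] = symbol_path
print(symbol_path)
```

Any debugger started from the same script would inherit the variable; it does not change the machine-wide setting.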

With the correct symbol path configured, whenever the debugger encounters a new binary for which it must find symbols, it will:

1) Check first in C:\SymCache. If a matching symbol is not found...

2) Check whether the symbol is available from the MS symbol server. If it is, copy it down to C:\SymCache so it can be cached for faster future operation.

In practice, the MS symbol server will contain symbols for MS binaries (exe, dll, sys), but not for 3rd-party modules.
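The two-step lookup can be pictured with a small simulation. This is a sketch under assumptions, not the debugger's real code: the folder layout (cache root, then PDB name, then a signature folder, then the PDB file) is copied from the 'lm' listing further down in this tutorial, the function names are made up, and ms_server_has is a stand-in for the actual HTTP request to the symbol server.

```python
import tempfile
from pathlib import Path


def cached_symbol_file(cache_root, pdb_name, signature):
    """Layout seen in the 'lm' output: <root>/<pdb name>/<signature>/<pdb name>."""
    return cache_root / pdb_name / signature / pdb_name


def locate_symbol(cache_root, pdb_name, signature, server_has):
    """Mimic the lookup order: local cache first, then the symbol server."""
    target = cached_symbol_file(cache_root, pdb_name, signature)
    if target.exists():
        return "cache hit"
    if server_has(pdb_name):
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_bytes(b"")  # placeholder for the downloaded PDB
        return "downloaded"
    return "not found"


def ms_server_has(pdb_name):
    """Hypothetical stand-in: pretend the server only knows two MS binaries."""
    return pdb_name in {"ntkrnlmp.pdb", "win32k.pdb"}


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    signature = "97A727330C184A9B9E1BDA0C3293AA142"  # win32k.pdb folder from the 'lm' listing
    results = [
        locate_symbol(root, "win32k.pdb", signature, ms_server_has),
        locate_symbol(root, "win32k.pdb", signature, ms_server_has),
        # Made-up PDB name and signature for a 3rd-party driver.
        locate_symbol(root, "tdrpm147.pdb", "0" * 33, ms_server_has),
    ]

print(results)
```

The first request downloads, the second is served from the cache, and the 3rd-party module is not found at all, which is the behaviour described above.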

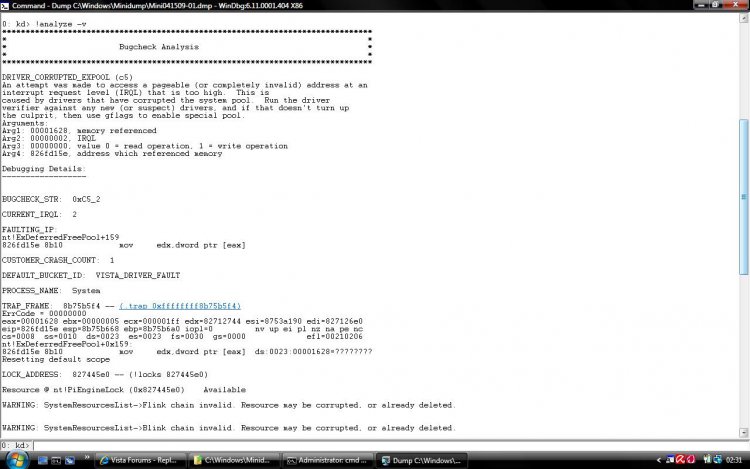

Opening and analysing dumps

Once you've done the preparatory steps above, you can double-click a dump (if WinDBG is registered as the handler) or use "File | Open Crash Dump..." in WinDBG to open and analyse a minidump or any other memory dump, including crashes from user-mode processes. If you want to re-invoke its automated analysis engine, use the !analyze -v command. The "-v" switch stands for "verbose" - it produces additional detail which may be useful.
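For anyone with a pile of dumps to get through, the same analysis can be run without the GUI. The sketch below only builds the command line and does not launch anything. It assumes kd.exe (the console kernel debugger from the same package) sits in c:\debuggers, and it uses the -z (dump file), -y (symbol path) and -c (initial command) switches. The dump file name is invented for the example.

```python
def analyze_command(dump_file, symbol_path, debugger=r"c:\debuggers\kd.exe"):
    """Build an argument list that opens a dump, runs !analyze -v, then quits.

    The debugger location is an assumption based on the install path
    suggested earlier; adjust it to wherever the package was installed.
    """
    return [
        debugger,
        "-z", dump_file,          # open this dump file
        "-y", symbol_path,        # symbol path for this session
        "-c", "!analyze -v; q",   # run the analysis, then exit the debugger
    ]


cmd = analyze_command(
    r"C:\Windows\Minidump\Mini040209-01.dmp",  # hypothetical file name
    r"SRV*C:\SymCache*http://msdl.microsoft.com/download/symbols",
)
print(" ".join(cmd))

# To actually run it on a machine with the debuggers installed:
#   import subprocess
#   report = subprocess.run(cmd, capture_output=True, text=True).stdout
```

For user-mode process dumps, cdb.exe from the same package would be the console debugger to try instead.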

The debugger will attempt to find the relevant symbols and then produce a diagnosis, to the best of its automated abilities. Look for a line like this in the output:

Probably caused by : win32k.sys ( win32k!FindTimer+57 )

If the automated diagnosis references a third-party driver (one that doesn't ship with Windows), the first task is to update that driver. If that doesn't help and subsequent dumps still point at the same driver, try removing it as a test.

In this case, win32k.sys is pinpointed and that is a very important driver which is a part of Windows itself.

When the "probably caused by" verdict points at an OS component, the situation is more complex and minidump analysis is not always sufficient to find the true cause of the crash.
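If you save the analysis output to a text file, the verdict line is easy to pull out with a regular expression. A minimal sketch, assuming the line always has the shape shown above (module name, then the faulting symbol in parentheses); the function name is made up.

```python
import re

# Matches e.g. "Probably caused by : win32k.sys ( win32k!FindTimer+57 )"
VERDICT = re.compile(
    r"^Probably caused by\s*:\s*(\S+)\s*\(\s*(.+?)\s*\)\s*$",
    re.MULTILINE,
)


def probable_cause(analysis_text):
    """Return (module, symbol) from !analyze output, or None if no verdict line."""
    match = VERDICT.search(analysis_text)
    if match is None:
        return None
    return match.group(1), match.group(2)


sample = """\
some earlier output
Probably caused by : win32k.sys ( win32k!FindTimer+57 )
some later output
"""

cause = probable_cause(sample)
print(cause)
```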

More Debugger Trickery For The Interested

A minidump contains 3 main items of information:

1) The stack of the thread which directly caused the crash. This can be viewed with the various 'k' (stack unwind) commands:

1: kd> kL

Child-SP RetAddr Call Site

fffffa60`09cd0528 fffff800`01cb60ee nt!KeBugCheckEx

fffffa60`09cd0530 fffff800`01cb5abc nt!KiBugCheckDispatch+0x6e

fffffa60`09cd0670 fffff800`01cc96bd nt!KiSystemServiceHandler+0x7c

fffffa60`09cd06b0 fffff800`01cd0cff nt!RtlpExecuteHandlerForException+0xd

fffffa60`09cd06e0 fffff800`01c8dd83 nt!RtlDispatchException+0x22f

fffffa60`09cd0dd0 fffff800`01cb61a9 nt!KiDispatchException+0xc3

fffffa60`09cd13d0 fffff800`01cb4d8d nt!KiExceptionDispatch+0xa9

fffffa60`09cd15b0 fffff960`0011c947 nt!KiGeneralProtectionFault+0xcd

fffffa60`09cd1740 fffff960`00121e2d win32k!FindTimer+0x57

fffffa60`09cd1790 fffff800`01cb5df3 win32k!NtUserKillTimer+0x5d

fffffa60`09cd17d0 00000000`7790c24a nt!KiSystemServiceCopyEnd+0x13

A "stack" is a thread-specific region of memory used for several purposes such as the temporary storage of data, the passing of data between different functions, and for many other more specialised needs such as quick string reversal ("debugger" reversed becomes "reggubed"). The "stack unwind" shown above reveals that the nt!KeBugCheckEx (blue screen) function was called by nt!KiBugCheckDispatch, which was in turn called by nt!KiSystemServiceHandler, and so on downwards. Therefore, the specific stack at the time of the crash can be likened to a crash "signature" of sorts.
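To treat the stack as a signature in a script, the 'kL' output can be split into module and function names. The sketch below assumes the three-column x64 layout shown above. Picking the first frame outside "nt" is only a crude heuristic for illustration; it is not how the debugger's own analysis arrives at its verdict.

```python
import re

# Child-SP, RetAddr, then the call site; addresses look like fffffa60`09cd0528.
FRAME = re.compile(
    r"^[0-9a-f]{8}`[0-9a-f]{8}\s+[0-9a-f]{8}`[0-9a-f]{8}\s+(\S+)$"
)


def call_sites(kl_output):
    """Return a list of (module, function+offset) tuples, innermost frame first."""
    frames = []
    for line in kl_output.splitlines():
        match = FRAME.match(line.strip())
        if match:
            module, _, function = match.group(1).partition("!")
            frames.append((module, function))
    return frames


def first_frame_outside(frames, module="nt"):
    """Crude heuristic: first frame that does not belong to the given module."""
    return next((frame for frame in frames if frame[0] != module), None)


kl_output = """\
Child-SP RetAddr Call Site
fffffa60`09cd0528 fffff800`01cb60ee nt!KeBugCheckEx
fffffa60`09cd0530 fffff800`01cb5abc nt!KiBugCheckDispatch+0x6e
fffffa60`09cd15b0 fffff960`0011c947 nt!KiGeneralProtectionFault+0xcd
fffffa60`09cd1740 fffff960`00121e2d win32k!FindTimer+0x57
fffffa60`09cd1790 fffff800`01cb5df3 win32k!NtUserKillTimer+0x5d
fffffa60`09cd17d0 00000000`7790c24a nt!KiSystemServiceCopyEnd+0x13
"""

frames = call_sites(kl_output)
suspect = first_frame_outside(frames)
print(suspect)
```

The sample is a shortened copy of the stack above; the heuristic lands on the same win32k!FindTimer frame that the automated verdict named.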

2) The processor register context of that thread:

1: kd> r

rax=fffffa6009cd0630 rbx=fffffa6009cd17d0 rcx=000000000000003b

rdx=00000000c0000005 rsi=fffff80001cb5df3 rdi=fffff80001e64df4

rip=fffff80001cb6350 rsp=fffffa6009cd0528 rbp=fffffa6009cd1508

r8=fffff9600011c947 r9=fffffa6009cd0ee0 r10=0000000000000000

r11=0000000000000001 r12=fffffa6009cc4000 r13=fffffa6009cd4000

r14=fffff80001c61000 r15=fffff80001daf4ec

iopl=0 nv up ei ng nz na po nc

cs=0010 ss=0018 ds=002b es=002b fs=0053 gs=002b efl=00000286

nt!KeBugCheckEx:

fffff800`01cb6350 48894c2408 mov qword ptr [rsp+8],rcx ss:0018:fffffa60`09cd0530=000000000000003b

Registers can be thought of as the fastest memory available to the processor. IA-32 (x86) and AMD64 (x64) both provide "general purpose" and specialised registers; AMD64 is an extension of the older and simpler IA-32 architecture. Note that "registers" are not in any direct way related to the "registry", despite the name similarity.

In the example above, the 64-bit Instruction Pointer register (RIP) which contains the address of the next instruction to execute was in nt!KeBugCheckEx at the time of the crash, which is not surprising given that's the "bluescreen" function.
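The 'r' output is a series of name=value pairs, so it converts easily into a dictionary for scripted comparison between dumps. A minimal sketch, assuming the hexadecimal x64 layout shown above; the function name is made up.

```python
import re

PAIR = re.compile(r"\b([a-z][a-z0-9]*)=([0-9a-f]+)\b")


def parse_registers(r_output):
    """Map register names to integer values parsed from 'r' output."""
    return {name: int(value, 16) for name, value in PAIR.findall(r_output)}


r_output = """\
rax=fffffa6009cd0630 rbx=fffffa6009cd17d0 rcx=000000000000003b
rdx=00000000c0000005 rsi=fffff80001cb5df3 rdi=fffff80001e64df4
rip=fffff80001cb6350 rsp=fffffa6009cd0528 rbp=fffffa6009cd1508
r8=fffff9600011c947 r9=fffffa6009cd0ee0 r10=0000000000000000
"""

regs = parse_registers(r_output)
print(hex(regs["rip"]), hex(regs["rcx"]))
```

Here rip matches the address of nt!KeBugCheckEx in the disassembly line, and rcx holds 0x3b, which under the x64 calling convention would be the first argument handed to that function.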

3) A list of loaded modules at the time of the crash. The 'lm' (list modules) command can show these:

1: kd> lm

start end module name

fffff800`01c1b000 fffff800`01c61000 hal (deferred)

fffff800`01c61000 fffff800`02179000 nt (pdb symbols) c:\symcache\ntkrnlmp.pdb\149C563625CA49CEA2881CEDF5D55CCF2\ntkrnlmp.pdb

fffff960`00050000 fffff960`00301000 win32k (pdb symbols) c:\symcache\win32k.pdb\97A727330C184A9B9E1BDA0C3293AA142\win32k.pdb

fffff960`00410000 fffff960`0041a000 TSDDD (deferred)

fffff960`00620000 fffff960`00631000 cdd (deferred)

...

In the module listing above, the debugger has only encountered "nt" (NTOSKRNL itself) and win32k.sys code in the stack, which is why it has downloaded symbols (they have a PDB extension) from the MS symbol server for those two binaries, but not for hal.dll, TSDDD.dll, and cdd.dll. Their symbol status is listed as "deferred".
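The module list can be parsed the same way, for example to see at a glance which modules had symbols loaded. This sketch assumes each module line has the shape shown above: start address, end address, name, a status in parentheses, and optionally the symbol file path. The function name is made up.

```python
import re

MODULE = re.compile(
    r"^([0-9a-f`]+)\s+([0-9a-f`]+)\s+(\S+)\s+\(([^)]*)\)\s*(.*)$"
)


def parse_modules(lm_output):
    """Return {module name: {"size": bytes, "status": str, "symbols": path or ""}}."""
    modules = {}
    for line in lm_output.splitlines():
        match = MODULE.match(line.strip())
        if not match:
            continue  # header line, "..." and blank lines fall through here
        start, end, name, status, symbols = match.groups()
        # Addresses are printed with a backtick in the middle; drop it before parsing.
        size = int(end.replace("`", ""), 16) - int(start.replace("`", ""), 16)
        modules[name] = {"size": size, "status": status, "symbols": symbols}
    return modules


lm_output = r"""
start end module name
fffff800`01c1b000 fffff800`01c61000 hal (deferred)
fffff800`01c61000 fffff800`02179000 nt (pdb symbols) c:\symcache\ntkrnlmp.pdb\149C563625CA49CEA2881CEDF5D55CCF2\ntkrnlmp.pdb
fffff960`00410000 fffff960`0041a000 TSDDD (deferred)
...
"""

modules = parse_modules(lm_output)
with_symbols = sorted(n for n, m in modules.items() if m["status"] == "pdb symbols")
print(with_symbols)
```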

Even More Debugger Trickery For The Really Interested

Minidumps can also easily reveal basic information about the Windows version, service pack level, time of the crash, and system uptime:

1: kd> vertarget

Windows Server 2008/Windows Vista Kernel Version 6001 (Service Pack 1) MP (4 procs) Free x64

Product: WinNt, suite: TerminalServer SingleUserTS

Built by: 6001.18145.amd64fre.vistasp1_gdr.080917-1612

Machine Name:

Kernel base = 0xfffff800`01c61000 PsLoadedModuleList = 0xfffff800`01e26db0

Debug session time: Thu Apr 2 07:07:55.076 2009 (GMT+11)

System Uptime: 0 days 0:02:00.122
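A very short uptime (the machine above fell over two minutes after boot) is a useful clue, and it can be extracted from saved 'vertarget' output. The sketch below assumes the uptime is always printed as days, then h:mm:ss with a three-digit millisecond part, as in the sample. The field names given to the "Built by" string are my own reading of its usual layout (build, revision, architecture plus free/checked flavour, branch, build date) rather than anything the debugger states.

```python
import re
from datetime import timedelta


def parse_uptime(vertarget_output):
    """Return the 'System Uptime' line as a timedelta, or None if absent."""
    match = re.search(
        r"System Uptime:\s*(\d+) days (\d+):(\d+):(\d+)\.(\d+)", vertarget_output
    )
    if match is None:
        return None
    days, hours, minutes, seconds, millis = (int(g) for g in match.groups())
    return timedelta(days=days, hours=hours, minutes=minutes,
                     seconds=seconds, milliseconds=millis)


def parse_build(vertarget_output):
    """Split the 'Built by' string on dots; the key names are assumptions."""
    match = re.search(r"Built by:\s*(\S+)", vertarget_output)
    if match is None:
        return None
    keys = ("build", "revision", "flavour", "branch", "stamp")
    return dict(zip(keys, match.group(1).split(".")))


vertarget_output = """\
Built by: 6001.18145.amd64fre.vistasp1_gdr.080917-1612
System Uptime: 0 days 0:02:00.122
"""

uptime = parse_uptime(vertarget_output)
build = parse_build(vertarget_output)
crashed_soon_after_boot = uptime < timedelta(minutes=5)  # arbitrary cut-off
print(uptime, build["branch"], crashed_soon_after_boot)
```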

Say you've identified a driver that you believe to be responsible, and now you want to see more particulars about that binary. Use 'lmvm' with the module name:

1: kd> lmvm tdrpm147

start end module name

fffffa60`0140c000 fffffa60`01590000 tdrpm147 (deferred)

Image path: \SystemRoot\system32\DRIVERS\tdrpm147.sys

Image name: tdrpm147.sys

Timestamp: Mon Oct 13 21:14:16 2008 (48F31F78)

CheckSum: 0018472C

ImageSize: 00184000

Translations: 0000.04b0 0000.04e4 0409.04b0 0409.04e4
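The hexadecimal number next to the timestamp is the same moment expressed as seconds since 1 January 1970 (UTC), which makes it easy to check how old a driver is. A minimal sketch: the +11 hour offset is an assumption taken from the "GMT+11" shown in the debug session time earlier, on the theory that the debugger prints timestamps in the analyst's local time zone.

```python
from datetime import datetime, timedelta, timezone


def link_time(hex_stamp, utc_offset_hours=0):
    """Convert the hex value from 'lmvm' into a timezone-aware datetime."""
    zone = timezone(timedelta(hours=utc_offset_hours))
    return datetime.fromtimestamp(int(hex_stamp, 16), tz=zone)


stamp_utc = link_time("48F31F78")
stamp_local = link_time("48F31F78", utc_offset_hours=11)  # assumed GMT+11

print(stamp_utc.isoformat())
print(stamp_local.strftime("%a %b %d %H:%M:%S %Y"))
```

With the +11 offset the second line reproduces the "Mon Oct 13 21:14:16 2008" text shown by the debugger.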

To get more info on the processor(s):

1: kd> !cpuinfo

CP F/M/S Manufacturer MHz PRCB Signature MSR 8B Signature Features

1 6,15,11 GenuineIntel 2405 000000b600000000 20193ffe

Cached Update Signature 000000b600000000

Initial Update Signature 000000b600000000

To see the writeup for any given bugcheck code:

1: kd> !analyze -show D1

DRIVER_IRQL_NOT_LESS_OR_EQUAL (d1)

An attempt was made to access a pageable (or completely invalid) address at an

interrupt request level (IRQL) that is too high. This is usually

caused by drivers using improper addresses.

If kernel debugger is available get stack backtrace.

Arguments:

Arg1: 0000000000000000, memory referenced

Arg2: 0000000000000000, IRQL

Arg3: 0000000000000000, value 0 = read operation, 1 = write operation

Arg4: 0000000000000000, address which referenced memory

And the most useful command of all - it brings up the debugger's comprehensive help document (debugger.chm):

1: kd> .hh

=============================================

Full dumps allow for much more meaningful analysis but they're hundreds of MB or even several GB in size, depending on the dump type, and for a 2-minute look at someone's BSoD problem a minidump is perfectly sufficient. If their system is crashing frequently and the dumps all point at the same 3rd-party driver - bingo. Otherwise, if virtually every dump points at a different culprit and they mostly look inscrutable, chances are it's a hardware issue or something which would require more advanced analysis methods.
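That rule of thumb can be turned into a quick tally once you have collected the "probably caused by" module from each dump. This is a sketch only: the 80% threshold is an arbitrary number picked for illustration, the function name is made up, and the list of verdicts is invented sample data.

```python
from collections import Counter


def tally_culprits(verdicts):
    """Return (most frequently blamed module, share of dumps that blamed it)."""
    if not verdicts:
        raise ValueError("need at least one verdict")
    counts = Counter(name.lower() for name in verdicts)
    module, hits = counts.most_common(1)[0]
    return module, hits / len(verdicts)


# Invented data: module names collected from five dumps of one machine.
verdicts = ["tdrpm147.sys", "tdrpm147.sys", "win32k.sys",
            "tdrpm147.sys", "TDRPM147.SYS"]

module, share = tally_culprits(verdicts)
consistent = share >= 0.8  # arbitrary threshold for "all point at the same driver"
print(module, share, consistent)
```

A high share for one 3rd-party driver is the "bingo" case; a low share spread across many modules fits the hardware-or-harder-problem case.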
